You can find me on substack now 🙂

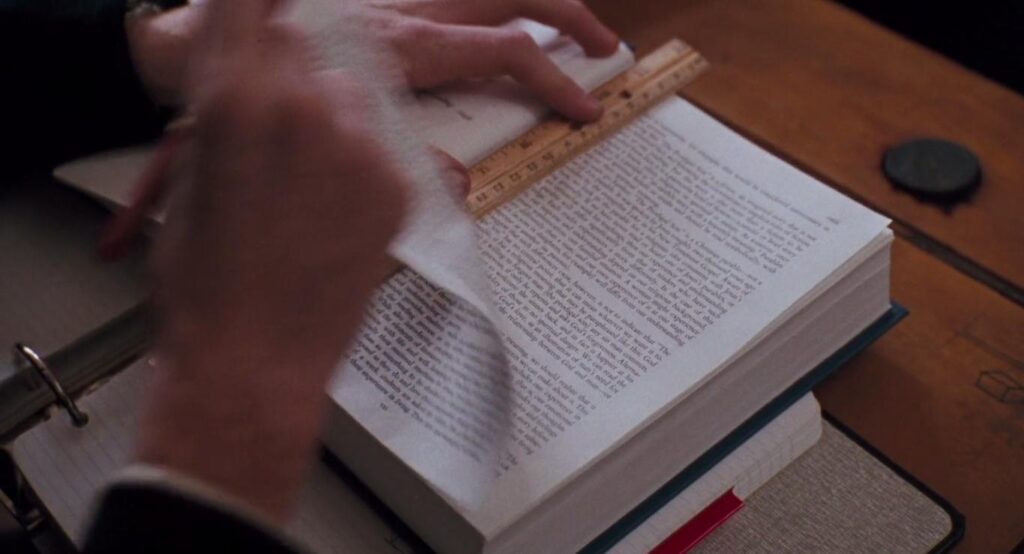

In the movie Dead Poets Society, Robin Williams’ character, John Keating, opens his first class by asking one of the students to read out the introduction to their poetry textbook (link at the bottom, so I don’t lose you during the first sentence). The intro describes a way of evaluating poetry that is based on a simple model that uses “perfection” on the X-axis and “importance” on the Y-axis. Calculating the total area of the poem, so it says, yields the measure of its greatness. Obviously, our beloved Robin Williams couldn’t disagree more: “We are not laying pipe here. We are talking about poetry”. He asks every student to rip out the whole introduction, emphasising his rejection of this type of thinking, especially in areas where it is utterly useless or even harmful.

I am going to try to help you rip out that page today or, at the very least, crumple it. Not for poetry, but for your views on human cognition and decision-making.

When people think about choices and behaviour, many adopt a sort of utility, cost-benefit, and model-style thinking. A good friend of mine (an economist) and I recently argued about the way we make decisions following his hypothetical example: “Should I”, as he put it, “tell the bastard next to me at the stadium to shut up, since he’s harassing players, or should I just keep it to myself?”. For him, that was a question of evaluating different outcome scenarios and weighing his discomfort of making a scene or possibly escalating the situation against his desire to stand up for what he thinks is right.

While this involves utility-style thinking and is clearly a form of cost-benefit analysis, it works without numbers and instead relies on a vague weighing of likelihood and preference: “Which outcome is best for me? I will pick the option that is overall most preferable”. There are many problems with that sort of thinking, and they exist on multiple levels.

There are problems within the utility model and especially with its assumptions, but most importantly there are big problems in adopting this model and its thinking.

The Preference Blender – Problems within the Model

A fundamental problem with utility-based thinking is that it mashes all sorts of different elements together and flattens them under the name of “preferences”. It compresses values, identity, emotions, bodily and neurological processes, temporal constraints and situational contexts into a single metric and pretends that they are at least somewhat quantifiable. These considerations can then be construed into different outcomes. In this case: speaking up and taking a risk versus not speaking up, but avoiding potential aggression and causing a scene. We pick the one we think we can live with better, or that avoids more pain (sprinkle some loss aversion), which in this case is maximum utility, and then move on.

These preferences, however, can change very quickly. Priming and framing effects show how easily decision-making processes can be manipulated without the decision-maker being aware of it. Just by calling the same prisoner’s dilemma the “Community Game” instead of the “Wall Street Game”, for example, we can change the odds of people cooperating versus defecting, despite it being the exact same game. If people had a firm grasp of their true preferences, their behaviour within the game should not be so easily swayed.

A behavioral economist might say that these are just reasons to improve the model, or that true rationality cannot be achieved because we are all fallible, irrational and biased human beings, but that we should nevertheless strive for it. On this view, behavioral economists should help people get there, either by teaching them or by nudging them towards their own happiness, using their self-interest for their own benefit.

If you are not a lost cause, you might ask yourself: Who is actually thinking this way? Only striving to maximise their own benefit and using it as the sole guidance for their decision-making. The answer is: most people in power and their advisers.

At LSE I went to a talk by Cass Sunstein, one of the most influential policy scholars of all time. He co-authored Nudge, which sold millions of copies worldwide, and he led the White House regulatory apparatus under Obama. He presented his new research on the “Barbie problem”, a term he invented for the phenomenon in which people would prefer a product not to exist, but still prefer consuming it over not consuming it. Say there is a party, for example, and you don’t want to go. There are the options of 1) not going to the party, 2) going to the party and 3) magically making the party disappear so that you do not miss out but don’t have to go. To Cass, that is a question of revealed preferences through choice. If you choose to go to the party, to him it means the “fear of missing out” outweighed the “not wanting to go”. If you stay home, the “not wanting to go” outweighs the “FOMO”.

When pushed by my friend Ben on whether there might be other explanations for wanting the party to happen but staying home, like wanting your friends to have a great party but not wanting to go yourself, Sunstein blinked about 15 times, said “hmm… yes” repeatedly for about a minute and seemed to have a complete reboot before answering. Turns out the researchers never ask participants why they make the decisions they make; they just infer possible explanations under the assumption of pure self-interest.

Not asking participants about their intentions is a limitation of quantitative research in general, but it becomes particularly problematic under the technocratic assumptions of (behavioral) economics, when we get all the information from the flow of money or revealed preferences. The calculative style of their research makes their work seem beyond all suspicion. Numbers, after all, don’t lie. Their work, however, is deeply influenced by their own worldview and based on faulty assumptions.

Minds as Machines and Maximizers – Problems with the Assumptions

Assumption 1: The Computer Metaphor

According to the economic model, we need to be able to access and pinpoint all these processes inside ourselves in order to make a “rational” judgement, and do so at rapid speed. It follows the logic of the computer metaphor, in which we could, like an Excel spreadsheet, intuitively weigh possible options, calculate them, and then make an informed decision. Even when we have all the time in the world, it is rarely that simple. If it were, Ted would never have struggled to decide which girl to go out with after whipping out his pros and cons list. After all, Ted should have had all the data available, and he even had ample time to make his decision.

Especially when we are forced into immediate action, the decisions we make are often pre-reflective and only become rationalised through post-hoc reflection. The computer metaphor misrepresents cognition as serial calculation rather than embodied, situational action. It fails to recognise that we often discover our preferences through action, not prior to it. The model wrongly assumes that preferences always precede choice.

As much as some people might want to be computers, we (un)fortunately do feel things, and we are situated in the environment with our bodies, which fundamentally shapes our cognition. Emotions are not merely a variable within a decision-making process. They can be the very foundation on which the decision is made.

Assumption 2: We Are All Assholes

Another assumption the model relies on is that people are purely self-interested and always wish for the best outcome for themselves. For many people, this sits at the core of how they understand human behaviour, but let’s try to make a quick case against it (for a longer one, read Humankind or look around you).

If, for example, I had just spent a lot of time thinking about ethics, maybe I had read about virtue ethics and wanted to practise what they preach, wouldn’t that strongly incentivise, or even demand, that I speak up for my convictions and tell the guy to shut the fuck up?

Now I hear the economists shouting from the back: “Well, but you certainly derive pleasure from that, don’t you? You’re just doing this because you couldn’t live with yourself if you acted against your values, and acting in accordance with them makes you feel good.” This is the trump card of arguing for constant self-interest. Everything you do, no matter how altruistic it might seem, you do only because you ultimately derive pleasure from it. That’s just human nature.

Actually, that’s just, like, your opinion, man! It is your Menschenbild.

Let’s return to the example and falsify a borderline unfalsifiable claim. What if we knew that this guy was aggressive and we therefore expected to get punched, or worse, expelled!? If we still spoke up, would that mean that we hated ourselves so much for possibly not speaking up that it would outweigh the potential pain of a broken nose? Maybe. Do we just love pain? Possibly.

But maybe we had just read about Rosa Luxemburg and how she was killed for standing up for her beliefs, and that somehow made it feel wrong to chicken out. Or maybe we remembered this one football fan who shouted “Halt die Fresse” at a Germany game, when some wanker started singing the German anthem during a minute of silence for the victims of a shooting. Maybe that inspired us to take action ourselves and do the right thing.

Hmm. But that guy got loads of applause and was interviewed by BILD. Maybe we just want a little bit of that fame for ourselves. Quick side note: why do we so desperately want to believe that everyone, including ourselves, sucks?

Let’s take this up a notch. What about a mother sacrificing herself for her children? Economists from the back: “Well, because she couldn’t live with herself if she didn’t save her kids, obviously.” Okay. Then what about the soldier throwing himself onto a grenade to save his friends? Or a student running towards a school shooter? Or a kid running into traffic to save a kitten? Does anyone really think these people weighed potential outcomes and risks and said, “Let’s try to get killed here, because running away would be worse”?

Maybe they had a subconscious death wish? Maybe. Or maybe we need to accept that people function in more complex ways than a balance sheet, that decisions can be pre-reflective, and that some people might actually do things because they feel they are the right things to do, not because of ulterior motives or selfish preferences.

Thinking Like a Spreadsheet – Problems with Treating the Model as a Guide

Even if you disagree with everything I have said so far, I need you to stick around for this part.

The biggest problem with the utility model is not that the model itself is bad, but the general overreliance on model-based thinking. We need models to make predictions, but a prediction machine must not be mistaken for an accurate depiction of human thought. This is often forgotten, even though the statistician George Box told us explicitly:

“All models are wrong, but some are useful”

This is the core problem: a model is always just a model. It is meant to depict reality in a simplified way, but in this case it becomes a guiding principle that shapes how people think and act. In aspiring to be more “rational”, people begin to integrate the model into their own thought processes, adopting its assumptions wholesale and without reflection. The economic framing and the assumptions it is built on turn into a self-fulfilling prophecy. The more pervasive cost-benefit thinking becomes, the more accurately it seems to describe reality, not because people have always thought this way, but because more and more people start to think in its terms. What begins as reductive description turns into actual reality.

The model reproduces its own assumptions within our thought processes, until our thinking becomes a simulacrum of itself. Rather than imperfectly describing how people think and act, it becomes an internalised view of how people assume they already do. Trying to think like a spreadsheet by weighing and calculating all options pushes us into overthinking, away from our bodies and deeper into our heads.

As Sydney J. Harris said:

“The real danger is not that computers will begin to think like men, but that men will begin to think like computers”

Not life imitates art, but life imitates computer – how sad.

Recovering Ethics – Choosing Character

So what’s the way out, then? If I only think in cost-benefit scenarios to derive maximum happiness for my own little me-corporation? A purely utility-maximising approach would probably have left us without most revolutionaries, and it would make genuinely good actions far rarer. Very few people would take real risks, stand up against injustice, or sacrifice comfort for principle if every decision were filtered through expected personal payoff.

Let us look at some of the OGs and posit a different perspective. According to Aristotle and other ancient Greek philosophers, a good life does not rely on or seek pleasure. Pleasure is the byproduct of acting in accordance with character. You don’t act virtuously because it feels good. It feels good because it expresses a settled disposition and shows alignment with yourself. For the economists among us: think of Goodhart’s law:

“When a measure becomes a target, it ceases to be a good measure.”

You heard it from one of your own. Maybe stop trying to maximise pleasure at every turn and instead try to live a life more in accordance with your virtues. It might make you feel a little less dead inside. But what are your virtues?

You have to ask yourself: What values are you practising when you don’t speak up? Cowardice, comfort, #riskaversion? Does that make you feel good and aligned, or does it make you feel uneasy and ashamed, maybe even a little disgusted with yourself? What virtues seem to want to pour out of you at times, only for you to suppress them with cost-benefit thinking until the moment to do or say something has passed? Who do you admire, not for what they have, but for who they are and how they act? And why?

While you sit with that, I will offer a deeper reflection and a more concrete “how-to-virtue” guide in my next post (ultimate virtue signaling).

Thank you for your attention!

As promised: Robin Williams
